This is where angels and pinheads emerge, I’m afraid. Are HTTP semantics to be taken directly as REST semantics? HTTP has no problem using PUT for a creation while indicating the id to be created, but my understanding of REST favoured POST for that.

I have to repeat though, I don’t think overloading the REST API with bulk transfer semantics is a good approach. Having said that, if we’re talking about setting the identifier of a resource on the caller side, I’d be inclined to use POST with the id included in the resource contents, which is what uid gives us. That is slightly better than PUT in my humble opinion because it sticks to the create semantics of POST rather than resorting to PUT, which is acceptable but not as obvious as POST. As I said, it feels like angels and pinheads to me. Also, in the PUT scenario we have to make sure the id in the URL and the one in the body match, which is more moving parts than I like.
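To make the "moving parts" point concrete, here is a minimal sketch of the two options. Everything in it is an assumption for illustration: the in-memory `store`, the handler names `handle_post` and `handle_put`, and the status codes chosen are hypothetical and are not taken from the openEHR REST specification.

```python
# Hypothetical in-memory store and handlers, for illustration only.
store = {}

def handle_post(body):
    """Create: the identifier comes from one place only, the body's uid."""
    uid = body["uid"]
    if uid in store:
        return 409  # conflict: a resource with this uid already exists
    store[uid] = body
    return 201

def handle_put(url_id, body):
    """Create or replace: the id appears in the URL and in the body,
    so the server has one extra consistency check to make."""
    if body.get("uid") != url_id:
        return 400  # URL id and body uid disagree
    created = url_id not in store
    store[url_id] = body
    return 201 if created else 200
```

With POST there is a single source for the id; with PUT the mismatch case has to be defined and handled as well.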

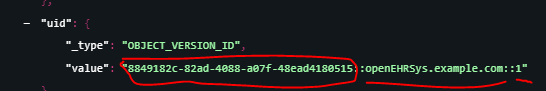

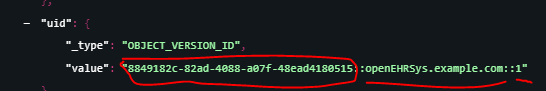

The real question I have with setting the ids is: what about versioning? This pops up every now and then and there are discussions about this somewhere here: the uid field is set to a type relatively high up in the identifier type hierarchy of the RM, but in reality it is a more concrete type, set by the convention of Ocean’s first use and everybody else following suit (vendor see, vendor do). As in:

First of all, how will we deal with system id and version when pushing a composition from another CDR via REST? If we use the object_version_id, then I remember `:` not being allowed by some frameworks because it is a reserved character in a URL (not a problem in POST). Then comes the versioning. What would the server do if you want to replicate the history of a composition (all versions) and you push v1 after you push v2? Assume the DB timed out under heavy write load while trying to grow the DB file size (yes, it happens), so the v1 insert failed but v2 worked. Now you have some missing history which you have to fix. Then there are other invariants, such as the contribution and folders, as @stefanspiska mentioned.
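A rough sketch of both problems, assuming the usual `object_id::creating_system_id::version_tree_id` layout of an object_version_id and assuming trunk versions only (no branches). The helper names and the example id are made up for illustration, and no particular server behaviour is implied.

```python
from urllib.parse import quote

def parse_version_id(version_id):
    """Split an object_version_id into its three parts.
    Assumes a plain integer version (trunk only, no branching)."""
    object_id, system_id, version = version_id.split("::")
    return object_id, system_id, int(version)

def missing_predecessors(stored_versions, incoming_version):
    """List the earlier versions that are absent when a version arrives."""
    return [v for v in range(1, incoming_version) if v not in stored_versions]

# Hypothetical id: the object id and system id are invented.
vid = "8849182c-82ad-4088-a07f-48ead4180515::cdr.example.org::2"

# In a URL path the ':' characters have to be percent-encoded to be safe
# with frameworks that reject them; in a POST body this does not arise.
encoded = quote(vid, safe="")

_, _, version = parse_version_id(vid)
gap = missing_predecessors(set(), version)  # v2 arrives, v1 never made it
```

Here `gap` is `[1]`: the server holds v2 without v1, and something has to decide whether to reject, park, or accept that state.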

Since the id of the resource is, in reality, overloaded with more information than you can represent with REST’s built-in constructs, we’ll have to define a lot of situations with headers at the minimum, such as the server behaviour when the out-of-order insert above happens.

I’m not writing all this to be purist or pedantic. Maybe I’m missing the point, but all of the above points to REST just not having the semantic bandwidth, IMHO.

Well, I’m afraid I’m of the opinion that it actually is not easy. I give @thomas.beale a lot of grief when it comes to (over)pragmatism on my part, but I don’t think this is a case where **you** can cut corners: "you" in bold because then it’ll become "this is how it’s done in openEHR" because Ian McNicoll did it that way. The same goes for other highly visible personas of openEHR, btw.

There’s valuable work and lessons to take from FHIR here, which went with some file exports for bulk data transfer, if my memory is correct.

I hope I could make your day once again, Ian.